The research study for the multimodal AI market involved extensive secondary sources, directories, and several journals. Primary sources were mainly industry experts from the core and related industries, preferred multimodal AI solution providers, third-party service providers, consulting service providers, end users, and other commercial enterprises. In-depth interviews were conducted with various primary respondents, including key industry participants and subject matter experts, to obtain and verify critical qualitative and quantitative information, and assess the market’s prospects.

Secondary Research

The market size of companies offering multimodal AI solutions and services was arrived at based on secondary data available through paid and unpaid sources. It was also arrived at by analyzing the product portfolios of major companies and rating the companies based on their performance and quality.

In the secondary research process, various sources were consulted to identify and collect information for this study. Secondary sources included annual reports, press releases, and investor presentations of companies; white papers, journals, and certified publications; and articles from recognized authors, directories, and databases. Data was also collected from other secondary sources, such as government websites, blogs, and vendor websites. Additionally, multimodal AI spending of various countries was extracted from the respective sources. Secondary research was mainly used to obtain key information on the industry’s value chain and supply chain. This information was used to identify key players based on solutions and services; to establish market classification and segmentation according to the offerings of major players; to track industry trends related to solutions, services, deployment modes, functionality, applications, verticals, and regions; and to capture key developments from both market- and technology-oriented perspectives.

Primary Research

In the primary research process, various primary sources from both supply and demand sides were interviewed to obtain qualitative and quantitative information on the market. The primary sources from the supply side included various industry experts, including Chief Experience Officers (CXOs); Vice Presidents (VPs); directors from business development, marketing, and multimodal AI expertise; related key executives from multimodal AI solution vendors, SIs, professional service providers, and industry associations; and key opinion leaders.

Primary interviews were conducted to gather insights, such as market statistics, revenue data collected from solutions and services, market breakups, market size estimations, market forecasts, and data triangulation. Primary research also helped understand various trends related to technologies, applications, deployments, and regions. Stakeholders from the demand side, such as Chief Information Officers (CIOs), Chief Technology Officers (CTOs), Chief Strategy Officers (CSOs), and end users of multimodal AI, were interviewed to understand the buyer’s perspective on suppliers, products, service providers, and their current usage of multimodal AI solutions and services, which would impact the overall multimodal AI market.

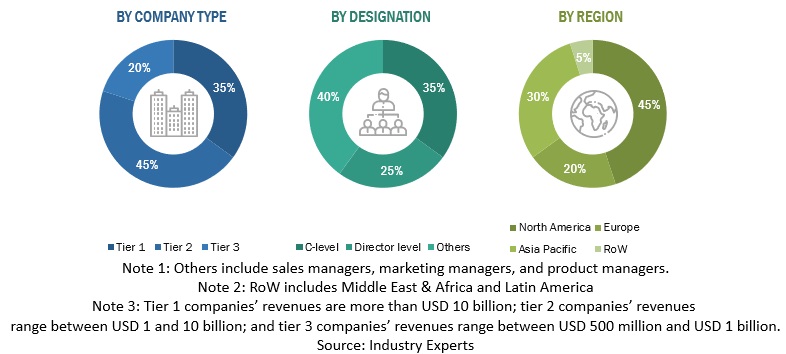

The following is the breakup of primary profiles:


Market Size Estimation

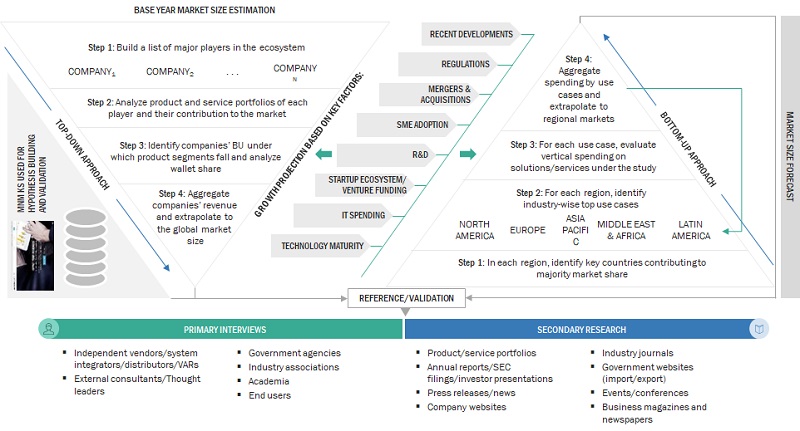

Multiple approaches were adopted for estimating and forecasting the multimodal AI market. The first approach involves estimating the market size by summation of companies’ revenue generated through the sale of solutions and services.

Market Size Estimation Methodology: Top-down Approach

In the top-down approach, an exhaustive list of all the vendors offering solutions and services in the Multimodal AI market was prepared. The revenue contribution of the market vendors was estimated through annual reports, press releases, funding, investor presentations, paid databases, and primary interviews. Each vendor’s offerings were evaluated based on the breadth of solutions and services, deployment modes, applications, and verticals. The aggregate of all the companies’ revenue was extrapolated to reach the overall market size. Each subsegment was studied and analyzed for its global market size and regional penetration. The markets were triangulated through both primary and secondary research. The primary procedure included extensive interviews for key insights from industry leaders, such as CIOs, CEOs, VPs, directors, and marketing executives. The market numbers were further triangulated with the existing MarketsandMarkets repository for validation.
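The aggregation and extrapolation step described above can be sketched as follows. This is only an illustration under stated assumptions: the function name, the `coverage_share` parameter (the assumed fraction of the total market represented by the tracked vendors), and all figures are hypothetical placeholders, not values or methods taken from the study.

```python
def extrapolate_market_size(vendor_revenue, coverage_share):
    """Scale the summed revenue of tracked vendors up to a total market size.

    vendor_revenue: mapping of vendor name -> estimated revenue.
    coverage_share: assumed fraction (0 < share <= 1) of the whole market
                    that the tracked vendors account for.
    """
    if not 0 < coverage_share <= 1:
        raise ValueError("coverage_share must be in the interval (0, 1]")
    tracked_total = sum(vendor_revenue.values())
    return tracked_total / coverage_share


# Placeholder figures in USD million; they are NOT data from the report.
vendor_revenue = {"vendor_a": 120.0, "vendor_b": 80.0, "vendor_c": 40.0}
market_size = extrapolate_market_size(vendor_revenue, coverage_share=0.6)
print(f"Estimated market size: {market_size:.1f} USD million")
```

The estimate is only as good as the coverage assumption, which is one reason the resulting figure is cross-checked against primary interviews and a second estimation approach.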

Market Size Estimation Methodology: Bottom-up Approach

In the bottom-up approach, the adoption rate of multimodal AI solutions and services among different end users in key countries with respect to their regions contributing the most to the market share was identified. For cross-validation, the adoption of multimodal AI solutions and services among industries, along with different use cases with respect to their regions, was identified and extrapolated. Weightage was given to use cases identified in different regions for the market size calculation.
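One plausible reading of this calculation is sketched below. The study does not publish its formula, so everything here is an assumption made for illustration: the `UseCase` structure, the weighted average of adoption rates, the multiplication by an average spend per adopter, and all numbers.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    adoption_rate: float  # assumed fraction of end users adopting (0 to 1)
    weight: float         # assumed relative weightage of this use case


def weighted_adoption_rate(use_cases):
    """Weighted average of adoption rates across use cases."""
    total_weight = sum(u.weight for u in use_cases)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    return sum(u.adoption_rate * u.weight for u in use_cases) / total_weight


def region_market_size(end_users, avg_spend, use_cases):
    """Estimate = potential end users x weighted adoption rate x average spend."""
    return end_users * weighted_adoption_rate(use_cases) * avg_spend


# Placeholder inputs; they are NOT data from the report.
use_cases = [
    UseCase("use_case_a", adoption_rate=0.20, weight=3.0),
    UseCase("use_case_b", adoption_rate=0.10, weight=1.0),
]
estimate = region_market_size(end_users=1000, avg_spend=0.05, use_cases=use_cases)
print(f"Regional estimate: {estimate:.2f} USD million")
```

Summing such regional estimates gives a bottom-up total that can be compared with the vendor-revenue total for cross-validation.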

Based on the market numbers, the regional split was determined using primary and secondary sources. The procedure included the analysis of the multimodal AI market’s regional penetration. Based on secondary research, the regional spending on Information and Communications Technology (ICT), socio-economic analysis of each country, strategic vendor analysis of major multimodal AI solution providers, and organic and inorganic business development activities of regional and global players were estimated. Through the data triangulation procedure and data validation through primaries, the values of the overall multimodal AI market size and the segments’ sizes were determined and confirmed in this study.

Top-down and Bottom-up approaches


Data Triangulation

After arriving at the overall market size using the market size estimation processes as explained above, the market was split into several segments and subsegments. To complete the overall market engineering process and arrive at the exact statistics of each market segment and subsegment, data triangulation and market breakup procedures were employed, wherever applicable. The overall market size was then used in the top-down procedure to estimate the size of other individual markets via percentage splits of the market segmentation.
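The percentage-split step can be illustrated with a short sketch. The segment names, shares, and total below are hypothetical placeholders, and the check that shares sum to one is an assumption about how such splits would typically be validated, not a documented part of the study.

```python
import math


def split_market(total_size, shares):
    """Allocate a total market size to segments using percentage splits.

    shares: mapping of segment name -> fraction of the total (must sum to 1).
    """
    if not math.isclose(sum(shares.values()), 1.0, abs_tol=1e-9):
        raise ValueError("segment shares must sum to 1")
    return {segment: total_size * share for segment, share in shares.items()}


# Placeholder values; they are NOT data from the report.
total_size = 400.0  # USD million
offering_shares = {"solutions": 0.7, "services": 0.3}
segment_sizes = split_market(total_size, offering_shares)

for segment, size in segment_sizes.items():
    print(f"{segment}: {size:.1f} USD million")
```

The same function can be applied again to a segment's size to derive its subsegments, so that every level of the breakdown still adds up to the overall market size.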

Market Definition

According to Twelve Labs, Multimodal AI is a rapidly evolving field that focuses on understanding and leveraging multiple modalities to build more comprehensive and accurate AI models.

According to Aimesoft, Multimodal AI is a new AI paradigm, in which various data types (image, text, speech, numerical data) are combined with multiple intelligence processing algorithms to achieve higher performances. Multimodal AI often outperforms single modal AI in many real-world problems.

Stakeholders

- Multimodal AI solution vendors
- Managed service providers
- Support and maintenance service providers
- System Integrators (SIs)/migration service providers
- Value-added resellers (VARs) and distributors
- Distributors and value-added resellers (VARs)
- System integrators (SIs)
- Independent software vendors (ISVs)
- Third-party providers
- Technology providers

Report Objectives

- To define, describe, and predict the multimodal AI market by offering (solutions and services), data modality, technology, type, vertical, and region
- To provide detailed information related to major factors (drivers, restraints, opportunities, and industry-specific challenges) influencing the market growth
- To analyze opportunities in the market and provide details of the competitive landscape for stakeholders and market leaders
- To forecast the market size of segments for five main regions: North America, Europe, Asia Pacific, the Middle East & Africa, and Latin America
- To profile key players and comprehensively analyze their market rankings and core competencies
- To analyze competitive developments, such as partnerships, new product launches, and mergers and acquisitions, in the multimodal AI market

Available Customizations

With the given market data, MarketsandMarkets offers customizations as per the company’s specific needs. The following customization options are available for the report:

Product Analysis

- Product matrix provides a detailed comparison of the product portfolio of each company

Geographic Analysis as per Feasibility

- Further breakup of the North American multimodal AI market
- Further breakup of the European market
- Further breakup of the Asia Pacific market
- Further breakup of the Middle East & Africa market
- Further breakup of the Latin American multimodal AI market

Company Information

- Detailed analysis and profiling of additional market players (up to five)
